I’ll tell you something that might sound controversial in cloud circles: the best cloud is often more than one cloud.

I’ve worked with dozens of enterprises over the years, and here’s what I’ve noticed. Some started with AWS years ago and built their entire infrastructure there. Then they realized Oracle Autonomous Database or Exadata could dramatically improve their database performance. Others were Oracle shops that wanted to leverage AWS’s machine learning services or global edge network.

The question isn’t really “which cloud is better?” The question is “how do we get the best of both?”

In this article, I’ll walk you through building a practical multi-cloud architecture connecting OCI and AWS. We’ll cover secure networking, data synchronization, identity federation, and the operational realities of running workloads across both platforms.

Why Multi-Cloud Actually Makes Sense

Let me be clear about something. Multi-cloud for its own sake is a terrible idea. It adds complexity, increases operational burden, and creates more things that can break. But multi-cloud for the right reasons? That’s a different story.

Here are legitimate reasons I’ve seen organizations adopt OCI and AWS together:

Database Performance: Oracle Autonomous Database and Exadata Cloud Service are genuinely difficult to match for Oracle workloads. If you’re running complex OLTP or analytics on Oracle, OCI’s database offerings are purpose-built for that.

AWS Ecosystem: AWS has services that simply don’t exist elsewhere. SageMaker for ML, Lambda’s maturity, CloudFront’s global presence, or specialized services like Rekognition and Comprehend.

Vendor Negotiation: Having workloads on multiple clouds gives you negotiating leverage. I’ve seen organizations save millions in licensing by demonstrating they could move workloads.

Mergers and Acquisitions: Company A runs on AWS, Company B runs on OCI. Now they’re one company. Multi-cloud by necessity.

Regulatory Requirements: Some industries require data sovereignty or specific compliance certifications that might be easier to achieve with a particular provider in a particular region.

If none of these apply to you, stick with one cloud. Seriously. But if they do, keep reading.

Architecture Overview

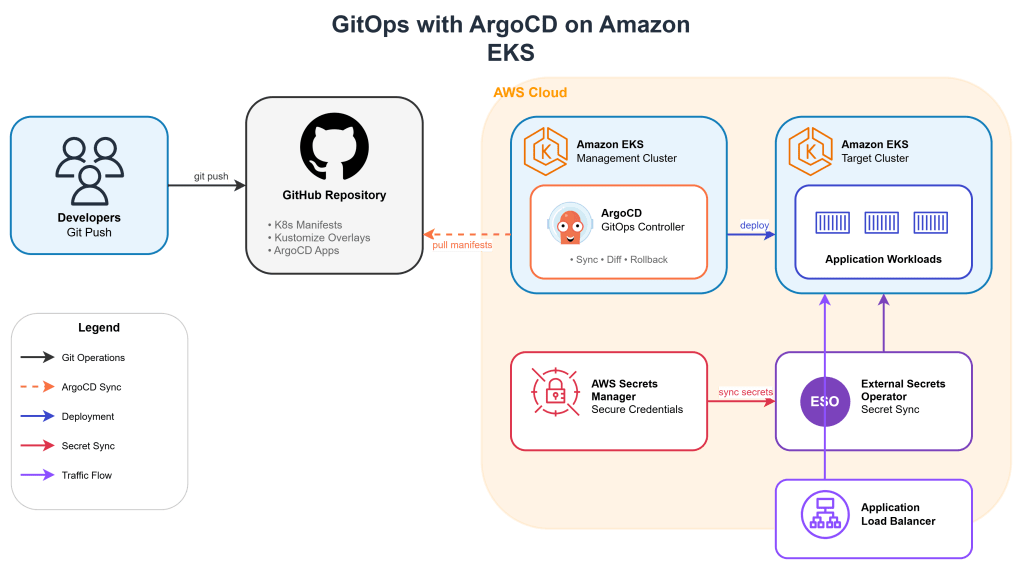

Let’s design a realistic scenario. We have an e-commerce company with:

- Application tier running on AWS (EKS, Lambda, API Gateway)

- Core transactional database on OCI (Autonomous Transaction Processing)

- Data warehouse on OCI (Autonomous Data Warehouse)

- Machine learning workloads on AWS (SageMaker)

- Shared data that needs to flow between both clouds

Setting Up Cross-Cloud Networking

The foundation of any multi-cloud architecture is networking. You need a secure, reliable, and performant connection between clouds.

Option 1: IPSec VPN (Good for Starting Out)

IPSec VPN is the quickest way to connect AWS and OCI. It runs over the public internet but encrypts everything. Good for development, testing, or low-bandwidth production workloads.

On OCI Side:

First, create a Dynamic Routing Gateway (DRG) and attach it to your VCN:

```bash
# Create DRG
oci network drg create \
  --compartment-id $COMPARTMENT_ID \
  --display-name "aws-interconnect-drg"

# Attach DRG to VCN
oci network drg-attachment create \
  --drg-id $DRG_ID \
  --vcn-id $VCN_ID \
  --display-name "vcn-attachment"
```

Create a Customer Premises Equipment (CPE) object representing AWS:

```bash
# Create CPE for AWS VPN endpoint
oci network cpe create \
  --compartment-id $COMPARTMENT_ID \
  --ip-address $AWS_VPN_PUBLIC_IP \
  --display-name "aws-vpn-endpoint"
```

Create the IPSec connection:

```bash
# Create IPSec connection (10.1.0.0/16 is the AWS VPC CIDR)
oci network ip-sec-connection create \
  --compartment-id $COMPARTMENT_ID \
  --cpe-id $CPE_ID \
  --drg-id $DRG_ID \
  --static-routes '["10.1.0.0/16"]' \
  --display-name "oci-to-aws-vpn"
```

On AWS Side:

Create a Customer Gateway pointing to OCI, then a VPN gateway attached to your VPC, and finally the VPN connection itself:

```bash
# Create Customer Gateway
aws ec2 create-customer-gateway \
  --type ipsec.1 \
  --public-ip $OCI_VPN_PUBLIC_IP \
  --bgp-asn 65000

# Create VPN Gateway
aws ec2 create-vpn-gateway \
  --type ipsec.1

# Attach to VPC
aws ec2 attach-vpn-gateway \
  --vpn-gateway-id $VGW_ID \
  --vpc-id $VPC_ID

# Create VPN Connection
aws ec2 create-vpn-connection \
  --type ipsec.1 \
  --customer-gateway-id $CGW_ID \
  --vpn-gateway-id $VGW_ID \
  --options '{"StaticRoutesOnly": true}'
```

Update route tables on both sides:

```bash
# AWS: Add route to OCI CIDR
aws ec2 create-route \
  --route-table-id $AWS_ROUTE_TABLE_ID \
  --destination-cidr-block 10.2.0.0/16 \
  --gateway-id $VGW_ID

# OCI: Add route to AWS CIDR
# Note: this update replaces the table's existing rules, so include any you want to keep
oci network route-table update \
  --rt-id $OCI_ROUTE_TABLE_ID \
  --route-rules '[{
    "destination": "10.1.0.0/16",
    "destinationType": "CIDR_BLOCK",
    "networkEntityId": "'$DRG_ID'"
  }]'
```
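Static routing like this only works if the two address ranges do not overlap. Here is a minimal sketch of a pre-flight check, using the CIDRs from the examples above. The function name `overlapping_pairs` and the labels `aws_vpc` and `oci_vcn` are my own for illustration.

```python
import ipaddress


def overlapping_pairs(cidrs):
    """Return every pair of named CIDR blocks that overlap."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in cidrs.items()}
    names = sorted(nets)
    return [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if nets[a].overlaps(nets[b])
    ]


# CIDRs used in the routing examples: an empty list means no conflicts
conflicts = overlapping_pairs({"aws_vpc": "10.1.0.0/16", "oci_vcn": "10.2.0.0/16"})
print(conflicts)
```

Running a check like this in CI before applying network changes is cheap, and it catches the case where someone later adds a subnet that collides with the other cloud.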

Option 2: Private Connectivity (Production Recommended)

For production workloads, you want dedicated private connectivity. This means OCI FastConnect paired with AWS Direct Connect, meeting at a common colocation facility.

The good news is that Oracle and AWS both have a presence in major colocation providers like Equinix. The setup involves:

- Establishing FastConnect to your colocation

- Establishing Direct Connect to the same colocation

- Connecting them via a cross-connect in the facility

```hcl
# Terraform for FastConnect virtual circuit
resource "oci_core_virtual_circuit" "aws_interconnect" {
  compartment_id       = var.compartment_id
  display_name         = "aws-fastconnect"
  type                 = "PRIVATE"
  bandwidth_shape_name = "1 Gbps"

  cross_connect_mappings {
    customer_bgp_peering_ip = "169.254.100.1/30"
    oracle_bgp_peering_ip   = "169.254.100.2/30"
  }

  customer_asn  = "65001"
  gateway_id    = oci_core_drg.main.id
  provider_name = "Equinix"
  region        = "Dubai"
}
```

```hcl
# Terraform for AWS Direct Connect
resource "aws_dx_connection" "oci_interconnect" {
  name          = "oci-direct-connect"
  bandwidth     = "1Gbps"
  location      = "Equinix DX1"
  provider_name = "Equinix"
}

resource "aws_dx_private_virtual_interface" "oci" {
  connection_id    = aws_dx_connection.oci_interconnect.id
  name             = "oci-vif"
  vlan             = 4094
  address_family   = "ipv4"
  bgp_asn          = 65002
  amazon_address   = "169.254.100.5/30"
  customer_address = "169.254.100.6/30"
  dx_gateway_id    = aws_dx_gateway.main.id
}
```

Honestly, setting this up involves coordination with both cloud providers and the colocation facility. Budget 4-8 weeks for the physical connectivity and plan for redundancy from day one.
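BGP peering addresses are easy to get wrong, and a mistake usually shows up as a session that never comes up. The sketch below checks that two peering addresses are distinct, usable hosts in the same subnet. The helper name `valid_bgp_peering` is hypothetical, and the addresses are the ones from the Terraform above.

```python
import ipaddress


def valid_bgp_peering(local_ip, remote_ip):
    """True if both interface addresses are distinct usable hosts in one subnet."""
    local = ipaddress.ip_interface(local_ip)
    remote = ipaddress.ip_interface(remote_ip)
    if local.network != remote.network or local.ip == remote.ip:
        return False
    hosts = set(local.network.hosts())  # excludes network and broadcast addresses
    return local.ip in hosts and remote.ip in hosts


# Peering pairs taken from the FastConnect and Direct Connect examples
print(valid_bgp_peering("169.254.100.1/30", "169.254.100.2/30"))
print(valid_bgp_peering("169.254.100.5/30", "169.254.100.6/30"))
```

Both pairs from the examples pass. A pair such as `169.254.100.1/30` and `169.254.100.5/30` would fail because the two addresses fall in different /30 blocks.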

Database Connectivity from AWS to OCI

Now that we have network connectivity, let’s connect AWS applications to OCI databases.

Configuring Autonomous Database for External Access

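First, restrict network access to your Autonomous Database. The command below enables an access control list (ACL) that admits only the AWS VPC CIDR. Note that this is not the same as a private endpoint: for traffic arriving over the VPN or FastConnect, you would typically also place the database on a private endpoint inside a VCN subnet.

```bash
# Restrict access to the AWS VPC CIDR and allow TLS without mTLS for simplicity.
# (Comments cannot follow a trailing backslash, so they live up here.)
oci db autonomous-database update \
  --autonomous-database-id $ADB_ID \
  --is-access-control-enabled true \
  --whitelisted-ips '["10.1.0.0/16"]' \
  --is-mtls-connection-required false
```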

Get the connection string:

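```bash
# JMESPath string literals use single quotes, so the filter is written as =='LOW'
oci db autonomous-database get \
  --autonomous-database-id $ADB_ID \
  --query "data.\"connection-strings\".profiles[?consumer=='LOW'].value | [0]" \
  --raw-output
```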
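Before wiring up drivers and credentials, it helps to confirm that the database listener is reachable from the AWS side at all. This is a rough sketch of a TCP probe using only the standard library. The function name `tcp_connect_ms` is my own, and the probe says nothing about TLS or authentication, only about routing and firewall rules.

```python
import socket
import time


def tcp_connect_ms(host, port, timeout=3.0):
    """Return the TCP connect time in milliseconds, or None if unreachable."""
    start = time.perf_counter()
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return (time.perf_counter() - start) * 1000
    except OSError:
        # Covers refused connections, timeouts, and DNS failures
        return None
```

From an EC2 instance or a pod in the VPC, you could call it with the host and port from your connection string, for example `tcp_connect_ms("adb.me-dubai-1.oraclecloud.com", 1522)`. A `None` result points at routing, security lists, or the ACL rather than at your application code.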

Application Configuration on AWS

Here’s a practical Python example for connecting from AWS Lambda to OCI Autonomous Database:

```python
# lambda_function.py
import json

import boto3
import cx_Oracle
from botocore.exceptions import ClientError


def get_db_credentials():
    """Retrieve database credentials from AWS Secrets Manager"""
    secret_name = "oci-adb-credentials"
    region_name = "us-east-1"

    session = boto3.session.Session()
    client = session.client(
        service_name='secretsmanager',
        region_name=region_name
    )

    try:
        response = client.get_secret_value(SecretId=secret_name)
        return json.loads(response['SecretString'])
    except ClientError as e:
        raise e


def handler(event, context):
    # Get credentials
    creds = get_db_credentials()

    # Connection string format for Autonomous DB.
    # Host, port, and service name are placeholders: use the descriptor
    # returned by the CLI command above.
    dsn = """(description=
        (retry_count=20)(retry_delay=3)
        (address=(protocol=tcps)(port=1522)
        (host=adb.me-dubai-1.oraclecloud.com))
        (connect_data=(service_name=xxx_atp_low.adb.oraclecloud.com))
        (security=(ssl_server_dn_match=yes)))"""

    connection = cx_Oracle.connect(
        user=creds['username'],
        password=creds['password'],
        dsn=dsn,
        encoding="UTF-8"
    )

    cursor = connection.cursor()
    cursor.execute("SELECT * FROM orders WHERE order_date = TRUNC(SYSDATE)")

    results = []
    for row in cursor:
        results.append({
            'order_id': row[0],
            'customer_id': row[1],
            'amount': float(row[2])
        })

    cursor.close()
    connection.close()

    return {
        'statusCode': 200,
        'body': json.dumps(results)
    }
```

For containerized applications on EKS, use a connection pool:

```python
# db_pool.py
import os

import cx_Oracle


class OCIDatabasePool:
    _pool = None

    @classmethod
    def get_pool(cls):
        # Create the pool lazily, once per process
        if cls._pool is None:
            cls._pool = cx_Oracle.SessionPool(
                user=os.environ['OCI_DB_USER'],
                password=os.environ['OCI_DB_PASSWORD'],
                dsn=os.environ['OCI_DB_DSN'],
                min=2,
                max=10,
                increment=1,
                encoding="UTF-8",
                threaded=True,
                getmode=cx_Oracle.SPOOL_ATTRVAL_WAIT
            )
        return cls._pool

    @classmethod
    def get_connection(cls):
        return cls.get_pool().acquire()

    @classmethod
    def release_connection(cls, connection):
        cls.get_pool().release(connection)
```
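A pool with only ten sessions is quickly exhausted if a code path forgets to release a connection after an error. One way to guard against that is a small context manager, sketched here. The name `pooled_connection` is hypothetical, and it assumes only that the pool exposes `acquire()` and `release()`, as the session pool above does.

```python
from contextlib import contextmanager


@contextmanager
def pooled_connection(pool):
    """Borrow a connection from any pool exposing acquire() and release()."""
    connection = pool.acquire()
    try:
        yield connection
    finally:
        # Runs on success and on exceptions, so connections are never leaked
        pool.release(connection)
```

With the class above, usage would look like `with pooled_connection(OCIDatabasePool.get_pool()) as conn:` followed by the query logic. The connection goes back to the pool even if the query raises.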

Kubernetes deployment for the application:

```yaml
# deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: order-service
  namespace: production
spec:
  replicas: 3
  selector:
    matchLabels:
      app: order-service
  template:
    metadata:
      labels:
        app: order-service
    spec:
      containers:
        - name: order-service
          image: 123456789.dkr.ecr.us-east-1.amazonaws.com/order-service:v1.0
          ports:
            - containerPort: 8080
          env:
            - name: OCI_DB_USER
              valueFrom:
                secretKeyRef:
                  name: oci-db-credentials
                  key: username
            - name: OCI_DB_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: oci-db-credentials
                  key: password
            - name: OCI_DB_DSN
              valueFrom:
                configMapKeyRef:
                  name: oci-db-config
                  key: dsn
          resources:
            requests:
              cpu: 250m
              memory: 512Mi
            limits:
              cpu: 1000m
              memory: 1Gi
          livenessProbe:
            httpGet:
              path: /health
              port: 8080
            initialDelaySeconds: 30
            periodSeconds: 10
```
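The liveness probe in this manifest expects something to answer on `/health` at port 8080. A minimal sketch of such an endpoint using only the standard library follows. The names `HealthHandler` and `make_server` are illustrative, and a real service would normally expose this route through its web framework instead.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path == "/health":
            body = b'{"status": "ok"}'
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        pass  # keep probe traffic out of the application logs


def make_server(port=8080):
    """Build an HTTP server that answers the liveness probe."""
    return HTTPServer(("0.0.0.0", port), HealthHandler)
```

Start it with `make_server().serve_forever()`. I would deliberately keep the database out of this check: if liveness depends on the cross-cloud link, a VPN blip makes Kubernetes restart every pod, which tends to make an outage worse. A separate readiness probe is a better place for dependency checks.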

Data Synchronization Between Clouds

Real multi-cloud architectures need data flowing between clouds. Here are practical patterns:

Pattern 1: Event-Driven Sync with Kafka

Use a managed Kafka service as the bridge:

```python
# AWS Lambda producer - sends events to Kafka
import json
import os

from kafka import KafkaProducer

# Created at module level so warm invocations reuse the connection
producer = KafkaProducer(
    bootstrap_servers=['kafka-broker-1:9092', 'kafka-broker-2:9092'],
    value_serializer=lambda v: json.dumps(v).encode('utf-8'),
    security_protocol='SASL_SSL',
    sasl_mechanism='PLAIN',
    sasl_plain_username=os.environ['KAFKA_USER'],
    sasl_plain_password=os.environ['KAFKA_PASSWORD']
)


def handler(event, context):
    # Process order and send to Kafka for OCI consumption.
    # process_order() is application-specific and not shown here.
    order_data = process_order(event)

    producer.send(
        'orders-topic',
        key=str(order_data['order_id']).encode(),
        value=order_data
    )
    producer.flush()

    return {'statusCode': 200}
```

OCI side consumer using OCI Functions:

```python
# OCI Function consumer
import io
import json
import logging

import cx_Oracle
from kafka import KafkaConsumer


def handler(ctx, data: io.BytesIO = None):
    consumer = KafkaConsumer(
        'orders-topic',
        bootstrap_servers=['kafka-broker-1:9092'],
        auto_offset_reset='earliest',
        enable_auto_commit=True,
        group_id='oci-order-processor',
        value_deserializer=lambda x: json.loads(x.decode('utf-8'))
    )

    # get_adb_connection() is an application helper (not shown) that
    # returns an open cx_Oracle connection to the Autonomous Database
    connection = get_adb_connection()
    cursor = connection.cursor()

    # Note: this loop blocks until the consumer stops; in a function with
    # an execution time limit, configure a consumer timeout or batch size
    for message in consumer:
        # The message must carry exactly the keys used as bind variables:
        # order_id, customer_id, amount, status
        order = message.value

        cursor.execute("""
            MERGE INTO orders o
            USING (SELECT :order_id AS order_id FROM dual) src
            ON (o.order_id = src.order_id)
            WHEN MATCHED THEN
                UPDATE SET amount = :amount, status = :status, updated_at = SYSDATE
            WHEN NOT MATCHED THEN
                INSERT (order_id, customer_id, amount, status, created_at)
                VALUES (:order_id, :customer_id, :amount, :status, SYSDATE)
        """, order)

        connection.commit()

    cursor.close()
    connection.close()
```
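The reason for using `MERGE` rather than a plain `INSERT` is that Kafka can deliver a message more than once, so the consumer has to be idempotent. The sketch below demonstrates the idea locally, with SQLite's `ON CONFLICT` upsert standing in for Oracle's `MERGE`. The table layout and the `apply_event` helper are assumptions for illustration, not the real schema.

```python
import sqlite3

# SQLite upsert, used here as a local stand-in for the Oracle MERGE statement
UPSERT = """
INSERT INTO orders (order_id, customer_id, amount, status)
VALUES (:order_id, :customer_id, :amount, :status)
ON CONFLICT(order_id) DO UPDATE SET
    amount = excluded.amount,
    status = excluded.status
"""


def apply_event(conn, order):
    """Apply one order event; replaying the same event changes nothing."""
    conn.execute(UPSERT, order)
    conn.commit()


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, "
    "amount REAL, status TEXT)"
)

event = {"order_id": 1, "customer_id": 42, "amount": 19.99, "status": "PAID"}
apply_event(conn, event)
apply_event(conn, event)  # simulated redelivery of the same message

row_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(row_count)  # 1
```

Because the write is keyed on `order_id`, a redelivered message updates the existing row instead of creating a duplicate, which is the property that makes at-least-once delivery safe.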

Pattern 2: Scheduled Batch Sync

For less time-sensitive data, batch synchronization is simpler and more cost-effective:

An AWS Step Functions state machine can orchestrate the batch sync:

```json
{
  "Comment": "Sync data from AWS to OCI",
  "StartAt": "ExtractFromAWS",
  "States": {
    "ExtractFromAWS": {
      "Type": "Task",
      "Resource": "arn:aws:lambda:us-east-1:123456789:function:extract-data",
      "Next": "UploadToS3"
    },
    "UploadToS3": {
      "Type": "Task",
      "Resource": "arn:aws:lambda:us-east-1:123456789:function:upload-to-s3",
      "Next": "CopyToOCI"
    },
    "CopyToOCI": {
      "Type": "Task",
      "Resource": "arn:aws:lambda:us-east-1:123456789:function:copy-to-oci-bucket",
      "Next": "LoadToADB"
    },
    "LoadToADB": {
      "Type": "Task",
      "Resource": "arn:aws:lambda:us-east-1:123456789:function:load-to-adb",
      "End": true
    }
  }
}
```

The Lambda function to copy data to OCI Object Storage:

```python
# copy_to_oci.py
import boto3
import oci


def handler(event, context):
    # Get file from S3 (read() loads the whole object into memory,
    # so this suits modest file sizes)
    s3 = boto3.client('s3')
    s3_object = s3.get_object(
        Bucket=event['bucket'],
        Key=event['key']
    )
    file_content = s3_object['Body'].read()

    # Upload to OCI Object Storage.
    # from_file() reads ~/.oci/config by default; in Lambda you need to
    # package a config file or build the config dict from stored secrets.
    config = oci.config.from_file()
    object_storage = oci.object_storage.ObjectStorageClient(config)
    namespace = object_storage.get_namespace().data

    object_storage.put_object(
        namespace_name=namespace,
        bucket_name="data-sync-bucket",
        object_name=event['key'],
        put_object_body=file_content
    )

    return {
        'oci_bucket': 'data-sync-bucket',
        'object_name': event['key']
    }
```

Load into Autonomous Database using DBMS_CLOUD:

```sql
-- Create credential for OCI Object Storage access
BEGIN
  DBMS_CLOUD.CREATE_CREDENTIAL(
    credential_name => 'OCI_CRED',
    username        => 'your_oci_username',
    password        => 'your_auth_token'
  );
END;
/

-- Load data from Object Storage
BEGIN
  DBMS_CLOUD.COPY_DATA(
    table_name      => 'ORDERS_STAGING',
    credential_name => 'OCI_CRED',
    file_uri_list   => 'https://objectstorage.me-dubai-1.oraclecloud.com/n/namespace/b/data-sync-bucket/o/orders_*.csv',
    format          => JSON_OBJECT(
      'type' VALUE 'CSV',
      'skipheaders' VALUE '1',
      'dateformat' VALUE 'YYYY-MM-DD'
    )
  );
END;
/

-- Merge staging into production
MERGE INTO orders o
USING orders_staging s
ON (o.order_id = s.order_id)
WHEN MATCHED THEN
  UPDATE SET o.amount = s.amount, o.status = s.status
WHEN NOT MATCHED THEN
  INSERT (order_id, customer_id, amount, status)
  VALUES (s.order_id, s.customer_id, s.amount, s.status);
```
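The load only works if the extract step writes files in the shape the `format` options describe: CSV, exactly one header row, and dates as `YYYY-MM-DD`. Here is a sketch of what that extract-side serializer might look like. The function name `orders_to_csv` and the column list, including `order_date`, are assumptions, so match them to your actual staging table.

```python
import csv
import io
from datetime import date


def orders_to_csv(rows):
    """Serialize order rows to CSV with one header line and ISO dates."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    # One header row, matching 'skipheaders' VALUE '1' on the load side
    writer.writerow(["order_id", "customer_id", "amount", "status", "order_date"])
    for r in rows:
        writer.writerow([
            r["order_id"],
            r["customer_id"],
            f"{r['amount']:.2f}",
            r["status"],
            r["order_date"].strftime("%Y-%m-%d"),  # matches 'dateformat'
        ])
    return buffer.getvalue()


sample = [{"order_id": 1, "customer_id": 42, "amount": 19.9,
           "status": "PAID", "order_date": date(2024, 1, 15)}]
print(orders_to_csv(sample))
```

Keeping the writer and the `COPY_DATA` format options next to each other in code review is worthwhile, since a mismatch between them usually surfaces only as rejected rows at load time.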

Identity Federation

Managing identities across clouds is a headache unless you set up proper federation. Here’s how to enable SSO between AWS and OCI using a common identity provider.

Using Azure AD as Common IdP (Yes, a Third Cloud)

This is actually quite common. Many enterprises use Azure AD (since renamed Microsoft Entra ID) for identity even if their workloads run elsewhere.

Configure OCI to Trust Azure AD:

```bash
# Create Identity Provider in OCI
oci iam identity-provider create-saml2-identity-provider \
  --compartment-id $TENANCY_ID \
  --name "AzureAD-Federation" \
  --description "Federation with Azure AD" \
  --product-type "IDCS" \
  --metadata-url "https://login.microsoftonline.com/$TENANT_ID/federationmetadata/2007-06/federationmetadata.xml"
```

Configure AWS to Trust Azure AD:

```bash
# Create SAML provider in AWS
aws iam create-saml-provider \
  --saml-metadata-document file://azure-ad-metadata.xml \
  --name AzureAD-Federation

# Create role for federated users
aws iam create-role \
  --role-name AzureAD-Admins \
  --assume-role-policy-document '{
    "Version": "2012-10-17",
    "Statement": [{
      "Effect": "Allow",
      "Principal": {"Federated": "arn:aws:iam::123456789:saml-provider/AzureAD-Federation"},
      "Action": "sts:AssumeRoleWithSAML",
      "Condition": {
        "StringEquals": {
          "SAML:aud": "https://signin.aws.amazon.com/saml"
        }
      }
    }]
  }'
```

Now your team can use the same Azure AD credentials to access both clouds.

Monitoring Across Clouds

You need unified observability. Here’s a practical approach using Grafana as the common dashboard:

```yaml
# docker-compose.yml for centralized Grafana
version: '3.8'

services:
  grafana:
    image: grafana/grafana:latest
    ports:
      - "3000:3000"
    volumes:
      - grafana-data:/var/lib/grafana
      - ./provisioning:/etc/grafana/provisioning
    environment:
      - GF_SECURITY_ADMIN_PASSWORD=secure_password
      - GF_INSTALL_PLUGINS=oci-metrics-datasource

volumes:
  grafana-data:
```

Configure data sources:

```yaml
# provisioning/datasources/datasources.yaml
apiVersion: 1

datasources:
  - name: AWS-CloudWatch
    type: cloudwatch
    access: proxy
    jsonData:
      authType: keys
      defaultRegion: us-east-1
    secureJsonData:
      accessKey: ${AWS_ACCESS_KEY}
      secretKey: ${AWS_SECRET_KEY}

  - name: OCI-Monitoring
    type: oci-metrics-datasource
    access: proxy
    jsonData:
      tenancyOCID: ${OCI_TENANCY_OCID}
      userOCID: ${OCI_USER_OCID}
      region: me-dubai-1
    secureJsonData:
      privateKey: ${OCI_PRIVATE_KEY}
```

Create a unified dashboard that shows both clouds:

```json
{
  "title": "Multi-Cloud Overview",
  "panels": [
    {
      "title": "AWS EKS CPU Utilization",
      "datasource": "AWS-CloudWatch",
      "targets": [{
        "namespace": "ContainerInsights",
        "metricName": "node_cpu_utilization",
        "dimensions": {"ClusterName": "production"}
      }]
    },
    {
      "title": "OCI Autonomous DB Sessions",
      "datasource": "OCI-Monitoring",
      "targets": [{
        "namespace": "oci_autonomous_database",
        "metric": "CurrentOpenSessionCount",
        "resourceGroup": "production-adb"
      }]
    },
    {
      "title": "Cross-Cloud Latency",
      "datasource": "Prometheus",
      "targets": [{
        "expr": "histogram_quantile(0.95, rate(cross_cloud_request_duration_seconds_bucket[5m]))"
      }]
    }
  ]
}
```

The `node_cpu_utilization` metric is published by CloudWatch Container Insights, so the first panel queries the `ContainerInsights` namespace. The third panel assumes a Prometheus data source that is provisioned separately.
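The latency panel relies on `histogram_quantile`, which estimates a percentile from bucket counts rather than from raw samples. To make that less of a black box, here is a simplified sketch of the interpolation as I understand it. The bucket boundaries and counts are made-up sample data, and the function is an approximation of the idea, not Prometheus's actual implementation.

```python
import math


def histogram_quantile(q, buckets):
    """Estimate a quantile from cumulative (upper_bound, count) buckets.

    The last bucket is expected to have an upper bound of math.inf.
    """
    if not 0 < q <= 1:
        raise ValueError("q must be in (0, 1]")
    buckets = sorted(buckets)
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    prev_bound, prev_count = 0.0, 0
    for i, (bound, count) in enumerate(buckets):
        if count >= rank:
            if math.isinf(bound):
                # Quantile lies in the overflow bucket: report the highest finite bound
                return buckets[i - 1][0]
            # Linear interpolation inside the bucket that contains the rank
            fraction = (rank - prev_count) / (count - prev_count)
            return prev_bound + (bound - prev_bound) * fraction
        prev_bound, prev_count = bound, count


# Hypothetical cumulative counts of cross-cloud request durations, in seconds
sample_buckets = [(0.05, 50), (0.1, 80), (0.25, 95), (0.5, 99), (math.inf, 100)]
p95 = histogram_quantile(0.95, sample_buckets)
print(round(p95, 3))
```

The practical takeaway is that the estimate can never be more precise than your bucket boundaries. If cross-cloud calls typically take 20 to 60 ms, make sure several buckets fall in that range, or the p95 will mostly reflect the bucket layout.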

Cost Management

Multi-cloud cost visibility is challenging. Here’s a practical approach:

```python
# cost_aggregator.py
from datetime import datetime, timedelta, timezone

import boto3
import oci


def get_aws_costs(start_date, end_date):
    client = boto3.client('ce')

    response = client.get_cost_and_usage(
        TimePeriod={
            'Start': start_date.strftime('%Y-%m-%d'),
            'End': end_date.strftime('%Y-%m-%d')
        },
        Granularity='DAILY',
        Metrics=['UnblendedCost'],
        GroupBy=[{'Type': 'DIMENSION', 'Key': 'SERVICE'}]
    )
    return response['ResultsByTime']


def get_oci_costs(start_date, end_date):
    config = oci.config.from_file()
    usage_api = oci.usage_api.UsageapiClient(config)

    response = usage_api.request_summarized_usages(
        request_summarized_usages_details=oci.usage_api.models.RequestSummarizedUsagesDetails(
            tenant_id=config['tenancy'],
            time_usage_started=start_date,
            time_usage_ended=end_date,
            granularity="DAILY",
            group_by=["service"]
        )
    )
    return response.data.items


def generate_report():
    # Align to midnight UTC: the OCI Usage API expects whole-day boundaries
    # for DAILY granularity
    end_date = datetime.now(timezone.utc).replace(
        hour=0, minute=0, second=0, microsecond=0
    )
    start_date = end_date - timedelta(days=30)

    aws_costs = get_aws_costs(start_date, end_date)
    oci_costs = get_oci_costs(start_date, end_date)

    # With GroupBy set, Cost Explorer reports amounts per group, not in 'Total'
    total_aws = sum(
        float(group['Metrics']['UnblendedCost']['Amount'])
        for day in aws_costs
        for group in day['Groups']
    )
    # computed_amount can be None for some line items
    total_oci = sum(item.computed_amount or 0 for item in oci_costs)

    print("30-Day Multi-Cloud Cost Summary")
    print('=' * 40)
    print(f"AWS Total: ${total_aws:,.2f}")
    print(f"OCI Total: ${total_oci:,.2f}")
    print(f"Combined Total: ${total_aws + total_oci:,.2f}")


if __name__ == "__main__":
    generate_report()
```

Lessons Learned

After running multi-cloud architectures for several years, here’s what I’ve learned:

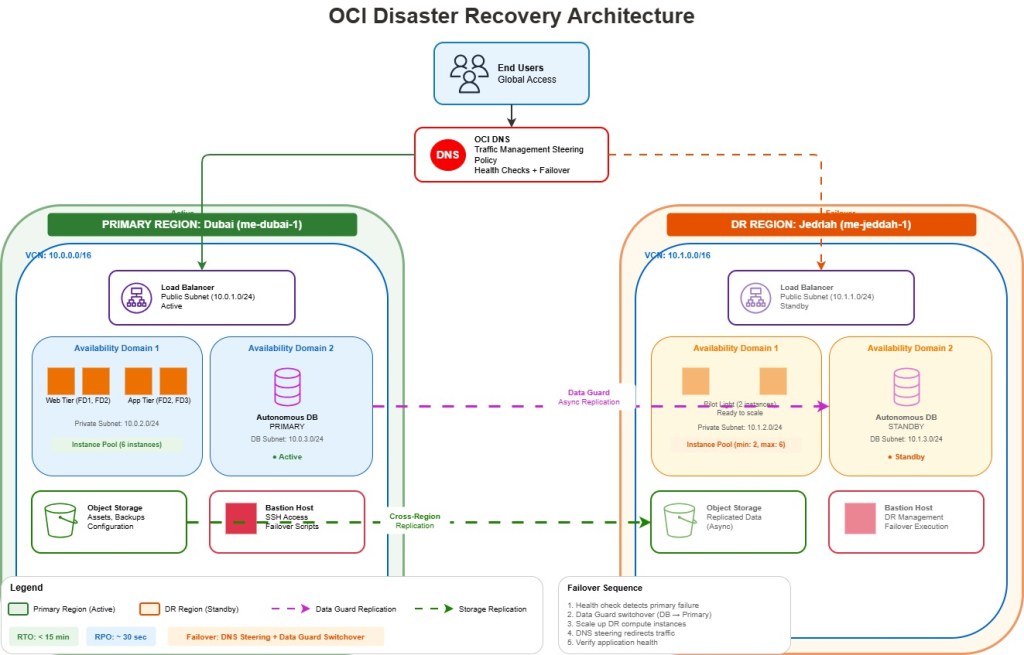

Network is everything. Invest in proper connectivity upfront. The $500/month you save by choosing VPN over dedicated connectivity will cost you thousands in time spent debugging performance issues.

Pick one cloud for each workload type. Don’t run the same thing in both clouds. Use OCI for Oracle databases, AWS for its unique services. Avoid the temptation to replicate everything everywhere.

Standardize your tooling. Terraform works on both clouds. Use it. Same for monitoring, logging, and CI/CD. The more consistent your tooling, the less your team has to context-switch.

Document your data flows. Know exactly what data goes where and why. This will save you during security audits and incident response.

Test cross-cloud failures. What happens when the VPN goes down? Can your application degrade gracefully? Find out before your customers do.
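One common way to degrade gracefully is a circuit breaker: after a few consecutive failures, stop calling the remote cloud for a while and serve a fallback such as cached data. The sketch below is a deliberately small version of that idea. The class, its parameters, and the default thresholds are my own choices for illustration, not a recommendation for specific values.

```python
import time


class CircuitBreaker:
    """Fail fast after repeated errors, then retry once the cooldown has passed."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at = None

    def call(self, func, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()      # circuit open: skip the remote call
            self.opened_at = None      # cooldown over: allow one trial call
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0              # success closes the circuit again
        return result
```

In the order service, `func` would be the query against the OCI database and `fallback` might return the last cached result or a "try again shortly" response. The point of the breaker is that when the link is down, requests fail in microseconds instead of each waiting out a connection timeout.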

Conclusion

Multi-cloud between OCI and AWS isn’t simple, but it’s absolutely achievable. The key is having clear reasons for using each cloud, solid networking fundamentals, and consistent operational practices.

Start small. Connect one application to one database across clouds. Get that working reliably before expanding. Build your team’s confidence and expertise incrementally.

The organizations that succeed with multi-cloud are the ones that treat it as an architectural choice, not a checkbox. They know exactly why they need both clouds and have designed their systems accordingly.

Regards,

Osama