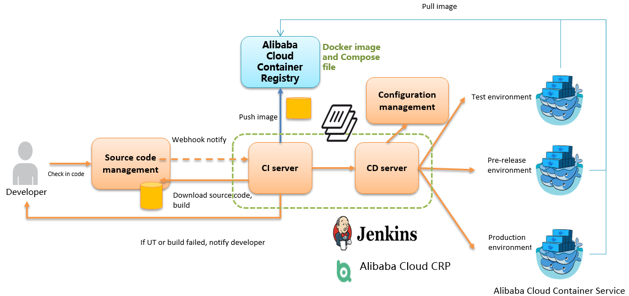


Building a Multi-Cloud Secrets Management Strategy with HashiCorp Vault

Let me ask you something. Where are your database passwords right now? Your API keys? Your TLS certificates?

If you’re like most teams I’ve worked with, the honest answer is “scattered everywhere.” Some are in environment variables. Some are in Kubernetes secrets (base64 encoded, which isn’t encryption by the way). A few are probably still hardcoded in configuration files that someone committed to Git three years ago.

I’m not judging. We’ve all been there. But as your infrastructure grows across multiple clouds, this approach becomes a ticking time bomb. One leaked credential can compromise everything.

In this article, I’ll show you how to build a centralized secrets management strategy using HashiCorp Vault. We’ll deploy it properly, integrate it with AWS, Azure, and GCP, and set up dynamic secrets that rotate automatically. No more shared passwords. No more “who has access to what” mysteries.

Why Vault? Why Now?

Before we dive into implementation, let me explain why I recommend Vault over cloud-native solutions like AWS Secrets Manager, Azure Key Vault, or GCP Secret Manager.

Don’t get me wrong. Those services are excellent. If you’re running entirely on one cloud, they might be all you need. But here’s the reality for most organizations:

You have workloads on AWS. Your data team uses GCP for BigQuery. Your enterprise applications run on Azure. Maybe you still have some on-premises systems. And you need a consistent way to manage secrets across all of them.

Vault gives you that single control plane. One audit log. One policy engine. One place to rotate credentials. And it integrates with everything.

Architecture Overview

Here’s what we’re building:

The key principle here is that applications never store long-lived credentials. Instead, they authenticate to Vault and receive short-lived, automatically rotated credentials for the specific resources they need.

Step 1: Deploy Vault on Kubernetes

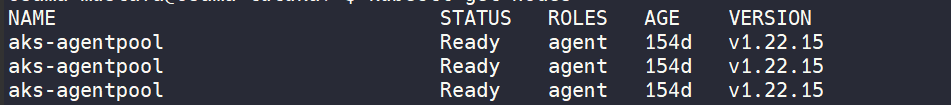

I prefer running Vault on Kubernetes because it gives you high availability and easy scaling, and it integrates cleanly with your existing workloads. We’ll use the official Helm chart.

Prerequisites

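You’ll need a Kubernetes cluster. Any managed Kubernetes service works: EKS, AKS, GKE, or even OKE. The Vault and kubectl commands in this guide work across all of them; the storage class, KMS seal, and load balancer settings are AWS examples that you would swap for your cloud’s equivalents.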

Create the Namespace and Storage

bash

kubectl create namespace vault

# Create storage class for Vault data
# This example uses AWS EBS, adjust for your cloud
cat <<EOF | kubectl apply -f -
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: vault-storage
provisioner: ebs.csi.aws.com
parameters:
  type: gp3
  encrypted: "true"
reclaimPolicy: Retain
volumeBindingMode: WaitForFirstConsumer
EOF

Configure Vault Helm Values

yaml

# vault-values.yaml
global:
  enabled: true
  tlsDisable: false

injector:
  enabled: true
  replicas: 2
  resources:
    requests:
      memory: 256Mi
      cpu: 250m
    limits:
      memory: 512Mi
      cpu: 500m

server:
  enabled: true

  # Mount the vault-tls secret at /vault/userconfig/vault-tls
  # (the listener and retry_join blocks below read from this path)
  extraVolumes:
    - type: secret
      name: vault-tls

  # Let the vault CLI inside the pod trust the server certificate
  extraEnvironmentVars:
    VAULT_CACERT: /vault/userconfig/vault-tls/ca.crt

  # Run 3 replicas for high availability
  ha:
    enabled: true
    replicas: 3

    # Use Raft for integrated storage
    raft:
      enabled: true
      setNodeId: true
      config: |
        ui = true

        listener "tcp" {
          tls_disable     = false
          address         = "[::]:8200"
          cluster_address = "[::]:8201"
          tls_cert_file   = "/vault/userconfig/vault-tls/tls.crt"
          tls_key_file    = "/vault/userconfig/vault-tls/tls.key"
        }

        storage "raft" {
          path = "/vault/data"

          retry_join {
            leader_api_addr     = "https://vault-0.vault-internal:8200"
            leader_ca_cert_file = "/vault/userconfig/vault-tls/ca.crt"
          }

          retry_join {
            leader_api_addr     = "https://vault-1.vault-internal:8200"
            leader_ca_cert_file = "/vault/userconfig/vault-tls/ca.crt"
          }

          retry_join {
            leader_api_addr     = "https://vault-2.vault-internal:8200"
            leader_ca_cert_file = "/vault/userconfig/vault-tls/ca.crt"
          }
        }

        service_registration "kubernetes" {}

        seal "awskms" {
          region     = "us-east-1"
          kms_key_id = "alias/vault-unseal-key"
        }

  resources:
    requests:
      memory: 1Gi
      cpu: 500m
    limits:
      memory: 2Gi
      cpu: 2000m

  dataStorage:
    enabled: true
    size: 20Gi
    storageClass: vault-storage

  auditStorage:
    enabled: true
    size: 10Gi
    storageClass: vault-storage

  # Service account for cloud integrations
  serviceAccount:
    create: true
    annotations:
      eks.amazonaws.com/role-arn: arn:aws:iam::ACCOUNT_ID:role/vault-server-role

ui:
  enabled: true
  serviceType: LoadBalancer
  annotations:
    service.beta.kubernetes.io/aws-load-balancer-type: nlb
    service.beta.kubernetes.io/aws-load-balancer-internal: "true"

Generate TLS Certificates

Vault should always use TLS. Here’s how to create certificates using cert-manager:

yaml

# vault-certificate.yaml
apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: vault-tls
  namespace: vault
spec:
  secretName: vault-tls
  duration: 8760h # 1 year
  renewBefore: 720h # 30 days
  subject:
    organizations:
      - YourCompany
  commonName: vault.vault.svc.cluster.local
  dnsNames:
    - vault
    - vault.vault
    - vault.vault.svc
    - vault.vault.svc.cluster.local
    - vault-0.vault-internal
    - vault-1.vault-internal
    - vault-2.vault-internal
    - "*.vault-internal"
  ipAddresses:
    - 127.0.0.1
  issuerRef:
    name: cluster-issuer
    kind: ClusterIssuer

Install Vault

bash

helm repo add hashicorp https://helm.releases.hashicorp.com
helm repo update

helm install vault hashicorp/vault \
  --namespace vault \
  --values vault-values.yaml \
  --version 0.27.0

Initialize and Unseal

This is a one-time operation. Keep these keys safe. I mean really safe. Like offline, in multiple secure locations.

bash

# Initialize Vault
# With the awskms seal configured above, init returns recovery keys,
# so the share and threshold flags are the -recovery-* variants
kubectl exec -n vault vault-0 -- vault operator init \
  -recovery-shares=5 \
  -recovery-threshold=3 \
  -format=json > vault-init.json

# The output contains your recovery keys and root token
# Store these securely!

# If not using auto-unseal, you'd initialize with
# -key-shares=5 -key-threshold=3 and then unseal manually:
# kubectl exec -n vault vault-0 -- vault operator unseal <key1>
# kubectl exec -n vault vault-0 -- vault operator unseal <key2>
# kubectl exec -n vault vault-0 -- vault operator unseal <key3>

# With AWS KMS auto-unseal configured, Vault unseals automatically
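
Once vault-init.json exists, the next job is getting each key share to a different person. Here is a minimal Python sketch of that hand-off. It assumes the JSON lists the shares under unseal_keys_b64 (Shamir seal) or recovery_keys_b64 (auto-unseal), which is how I understand the -format=json output; the assign_shares helper and the custodian names are hypothetical.

```python
import json


def assign_shares(init_json: str, custodians: list[str]) -> dict[str, str]:
    """Pair each custodian with exactly one key share from the init output."""
    data = json.loads(init_json)
    # Assumption: shares live under "unseal_keys_b64" (Shamir seal) or
    # "recovery_keys_b64" (auto-unseal). Use whichever list is non-empty.
    shares = data.get("unseal_keys_b64") or data.get("recovery_keys_b64") or []
    if len(shares) != len(custodians):
        raise ValueError(
            f"{len(shares)} shares for {len(custodians)} custodians"
        )
    return dict(zip(custodians, shares))
```

You would call it as assign_shares(open("vault-init.json").read(), ["alice", "bob", ...]) and deliver each share over a separate channel. Delete vault-init.json afterwards so the shares never sit together on one disk.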

Step 2: Configure Authentication Methods

Now we need to tell Vault how applications will authenticate. This is where it gets interesting.

Kubernetes Authentication

Applications running in Kubernetes can authenticate using their service account tokens. No passwords needed.

bash

# Enable Kubernetes auth
vault auth enable kubernetes

# Configure it to trust our cluster
vault write auth/kubernetes/config \
  kubernetes_host="https://$KUBERNETES_PORT_443_TCP_ADDR:443" \
  token_reviewer_jwt="$(cat /var/run/secrets/kubernetes.io/serviceaccount/token)" \
  kubernetes_ca_cert=@/var/run/secrets/kubernetes.io/serviceaccount/ca.crt \
  issuer="https://kubernetes.default.svc.cluster.local"
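
If logins fail with an issuer mismatch, it helps to look at what the service account token actually claims. Here is a rough Python sketch, assuming the token is a standard three-part JWT. The jwt_claims helper is hypothetical, and it decodes the payload without verifying the signature, so treat it as a debugging aid only.

```python
import base64
import json


def jwt_claims(token: str) -> dict:
    """Decode the payload of a JWT WITHOUT verifying its signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("expected header.payload.signature")
    payload = parts[1]
    # JWTs use unpadded base64url, so restore the padding before decoding.
    payload += "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(payload))
```

Comparing jwt_claims(token)["iss"] with the issuer value you configured is usually enough to spot the mismatch. The "sub" claim shows the namespace and service account name that a role's bindings are checked against.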

AWS IAM Authentication

Workloads running on EC2, Lambda, or ECS can authenticate using their IAM roles.

bash

# Enable AWS auth
vault auth enable aws

# Configure AWS credentials for Vault to verify requests
vault write auth/aws/config/client \
  secret_key="$AWS_SECRET_KEY" \
  access_key="$AWS_ACCESS_KEY"

# Create a role that EC2 instances can use
vault write auth/aws/role/ec2-app-role \
  auth_type=iam \
  bound_iam_principal_arn="arn:aws:iam::ACCOUNT_ID:role/app-server-role" \
  policies=app-policy \
  ttl=1h

Azure Authentication

For Azure workloads using Managed Identities:

bash

# Enable Azure auth
vault auth enable azure

# Configure Azure
vault write auth/azure/config \
  tenant_id="$AZURE_TENANT_ID" \
  resource="https://management.azure.com/" \
  client_id="$AZURE_CLIENT_ID" \
  client_secret="$AZURE_CLIENT_SECRET"

# Create a role for Azure VMs
vault write auth/azure/role/azure-app-role \
  policies=app-policy \
  bound_subscription_ids="$AZURE_SUBSCRIPTION_ID" \
  bound_resource_groups=production-rg \
  ttl=1h

GCP Authentication

For GCP workloads using service accounts:

bash

# Enable GCP auth
vault auth enable gcp

# Configure GCP
vault write auth/gcp/config \
  credentials=@gcp-credentials.json

# Create a role for GCE instances
vault write auth/gcp/role/gce-app-role \
  type="gce" \
  policies=app-policy \
  bound_projects="my-project-id" \
  bound_zones="us-central1-a,us-central1-b" \
  ttl=1h

Step 3: Set Up Dynamic Secrets

Here’s where the magic happens. Instead of storing static database passwords, Vault can generate unique credentials on demand and revoke them automatically when they expire.

Dynamic AWS Credentials

bash

# Enable AWS secrets engine
vault secrets enable aws

# Configure root credentials (Vault uses these to create dynamic creds)
vault write aws/config/root \
  access_key="$AWS_ACCESS_KEY" \
  secret_key="$AWS_SECRET_KEY" \
  region=us-east-1

# Set the lease so generated credentials expire after 1 hour
# (without this, the mount's default TTL applies)
vault write aws/config/lease \
  lease=1h \
  lease_max=24h

# Create a role that generates S3 read-only credentials
vault write aws/roles/s3-reader \
  credential_type=iam_user \
  policy_document=-<<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "s3:GetObject",
        "s3:ListBucket"
      ],
      "Resource": [
        "arn:aws:s3:::my-bucket",
        "arn:aws:s3:::my-bucket/*"
      ]
    }
  ]
}
EOF

# Now any client whose policy allows it can get temporary AWS credentials
vault read aws/creds/s3-reader

# Returns:
# access_key        AKIA...
# secret_key        xyz123...
# lease_duration    1h

# These credentials will be automatically revoked after 1 hour

Dynamic Database Credentials

This is probably my favorite feature. Every time an application needs to connect to a database, it gets a unique username and password that only it knows.

bash

# Enable database secrets engine
vault secrets enable database

# Configure PostgreSQL connection
vault write database/config/production-postgres \
  plugin_name=postgresql-database-plugin \
  allowed_roles="app-readonly,app-readwrite" \
  connection_url="postgresql://{{username}}:{{password}}@db.example.com:5432/appdb?sslmode=require" \
  username="vault_admin" \
  password="vault_admin_password"

# Create a read-only role
vault write database/roles/app-readonly \
  db_name=production-postgres \
  creation_statements="CREATE ROLE \"{{name}}\" WITH LOGIN PASSWORD '{{password}}' VALID UNTIL '{{expiration}}'; \
    GRANT SELECT ON ALL TABLES IN SCHEMA public TO \"{{name}}\";" \
  revocation_statements="DROP ROLE IF EXISTS \"{{name}}\";" \
  default_ttl="1h" \
  max_ttl="24h"

# Create a read-write role
vault write database/roles/app-readwrite \
  db_name=production-postgres \
  creation_statements="CREATE ROLE \"{{name}}\" WITH LOGIN PASSWORD '{{password}}' VALID UNTIL '{{expiration}}'; \
    GRANT SELECT, INSERT, UPDATE, DELETE ON ALL TABLES IN SCHEMA public TO \"{{name}}\";" \
  revocation_statements="DROP ROLE IF EXISTS \"{{name}}\";" \
  default_ttl="1h" \
  max_ttl="24h"

Now when your application requests credentials:

bash

vault read database/creds/app-readonly

# Returns:
# username          v-kubernetes-app-readonly-abc123
# password          A1B2C3D4E5F6...
# lease_duration    1h

Every request gets a different username and password. If credentials are compromised, they expire automatically. And you have a complete audit trail of who accessed what, when.

Dynamic Azure Credentials

bash

# Enable Azure secrets engine
vault secrets enable azure

# Configure Azure
vault write azure/config \
  subscription_id="$AZURE_SUBSCRIPTION_ID" \
  tenant_id="$AZURE_TENANT_ID" \
  client_id="$AZURE_CLIENT_ID" \
  client_secret="$AZURE_CLIENT_SECRET"

# Create a role that generates Azure Service Principals
vault write azure/roles/contributor \
  ttl=1h \
  azure_roles=-<<EOF
[
  {
    "role_name": "Contributor",
    "scope": "/subscriptions/$AZURE_SUBSCRIPTION_ID/resourceGroups/production-rg"
  }
]
EOF

Step 4: Application Integration

Let’s see how applications actually use Vault. I’ll show you several patterns.

Pattern 1: Vault Agent Sidecar (Kubernetes)

This is my recommended approach for Kubernetes. Vault Agent runs alongside your application and handles authentication and secret retrieval automatically.

yaml

# deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app
  # Must match the namespace bound to the Vault role
  namespace: production
spec:
  # apps/v1 requires a selector that matches the pod template labels
  selector:
    matchLabels:
      app: my-app
  template:
    metadata:
      labels:
        app: my-app
      annotations:
        # These annotations tell Vault Agent what to do
        vault.hashicorp.com/agent-inject: "true"
        vault.hashicorp.com/role: "my-app-role"
        vault.hashicorp.com/agent-inject-secret-db-creds: "database/creds/app-readonly"
        vault.hashicorp.com/agent-inject-template-db-creds: |
          {{- with secret "database/creds/app-readonly" -}}
          export DB_USERNAME="{{ .Data.username }}"
          export DB_PASSWORD="{{ .Data.password }}"
          {{- end }}
    spec:
      serviceAccountName: my-app
      containers:
        - name: my-app
          image: my-app:latest
          command: ["/bin/sh", "-c"]
          args:
            # "." is the POSIX form of "source" and works in every /bin/sh
            - . /vault/secrets/db-creds && ./start-app.sh

When this pod starts, Vault Agent automatically:

- Authenticates to Vault using the Kubernetes service account

- Retrieves database credentials

- Writes them to /vault/secrets/db-creds

- Renews the credentials before they expire

- Updates the file when credentials change

Your application just reads from a file. It doesn’t need to know anything about Vault.
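
If you would rather not load the file through a shell, the application could parse it itself. Here is a minimal Python sketch, assuming the file contains only export NAME="value" lines, as the template above renders them. The parse_exports helper is hypothetical.

```python
import shlex


def parse_exports(text: str) -> dict[str, str]:
    """Parse shell-style `export NAME="value"` lines into a dict."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("export "):
            continue  # ignore blank lines and anything unexpected
        # shlex strips the shell quoting around each value.
        for token in shlex.split(line[len("export "):]):
            name, sep, value = token.partition("=")
            if sep:
                values[name] = value
    return values
```

Reading /vault/secrets/db-creds and passing its contents to parse_exports gives you DB_USERNAME and DB_PASSWORD. Because Vault Agent rewrites the file when credentials change, re-reading it before opening a new connection picks up the rotation without a restart.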

Pattern 2: Direct SDK Integration

For applications that need more control, you can use the Vault SDK directly:

python

# Python example
import os

import hvac
import psycopg2


def get_vault_client():
    """Create Vault client using Kubernetes auth."""
    client = hvac.Client(url=os.environ['VAULT_ADDR'])

    # Read the service account token
    with open('/var/run/secrets/kubernetes.io/serviceaccount/token') as f:
        jwt = f.read()

    # Authenticate to Vault
    client.auth.kubernetes.login(
        role='my-app-role',
        jwt=jwt,
        mount_point='kubernetes'
    )
    return client


def get_database_credentials():
    """Get dynamic database credentials."""
    client = get_vault_client()

    # Request new database credentials
    response = client.secrets.database.generate_credentials(
        name='app-readonly',
        mount_point='database'
    )
    return {
        'username': response['data']['username'],
        'password': response['data']['password'],
        'lease_id': response['lease_id'],
        'lease_duration': response['lease_duration']
    }


def connect_to_database():
    """Connect to database with dynamic credentials."""
    creds = get_database_credentials()
    connection = psycopg2.connect(
        host='db.example.com',
        database='appdb',
        user=creds['username'],
        password=creds['password']
    )
    return connection
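
One thing to watch with this pattern: every call to get_database_credentials() creates a brand-new database user. A small cache that reuses credentials until part of the lease has elapsed avoids that. This is a sketch under my own assumptions, not part of hvac; the CredentialCache class and the 0.7 refresh fraction are hypothetical choices.

```python
import time
from typing import Callable, Optional


class CredentialCache:
    """Reuse dynamic credentials until a fraction of their lease has elapsed."""

    def __init__(
        self,
        fetch: Callable[[], dict],
        refresh_fraction: float = 0.7,
        clock: Callable[[], float] = time.monotonic,
    ):
        self._fetch = fetch  # returns a dict with a "lease_duration" in seconds
        self._refresh_fraction = refresh_fraction
        self._clock = clock  # injectable so the logic can be tested
        self._creds: Optional[dict] = None
        self._refresh_at = 0.0

    def get(self) -> dict:
        """Return cached credentials, fetching new ones once they are stale."""
        now = self._clock()
        if self._creds is None or now >= self._refresh_at:
            self._creds = self._fetch()
            lease = self._creds["lease_duration"]
            self._refresh_at = now + lease * self._refresh_fraction
        return self._creds
```

With the functions above, you would build it once as CredentialCache(get_database_credentials) and call get() wherever you open a connection. Connections opened with older credentials keep working until that lease expires, so refreshing early leaves room for a clean switchover.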

Pattern 3: External Secrets Operator

If you prefer Kubernetes-native secrets, use External Secrets Operator to sync Vault secrets to Kubernetes:

yaml

# external-secret.yaml
apiVersion: external-secrets.io/v1beta1
kind: ExternalSecret
metadata:
  name: app-secrets
spec:
  refreshInterval: 1h
  secretStoreRef:
    kind: ClusterSecretStore
    name: vault-backend
  target:
    name: app-secrets
    creationPolicy: Owner
  data:
    - secretKey: api-key
      remoteRef:
        key: secret/data/app/api-key
        property: value
    - secretKey: db-password
      remoteRef:
        key: secret/data/app/database
        property: password

Step 5: Policies and Access Control

Vault policies determine who can access what. Be specific and follow the principle of least privilege.

hcl

# app-policy.hcl

# Allow reading dynamic database credentials
path "database/creds/app-readonly" {
  capabilities = ["read"]
}

# Allow reading application secrets
path "secret/data/app/*" {
  capabilities = ["read", "list"]
}

# Deny access to admin paths
path "sys/*" {
  capabilities = ["deny"]
}

# Allow the app to renew its own token
path "auth/token/renew-self" {
  capabilities = ["update"]
}

Apply the policy:

bash

vault policy write app-policy app-policy.hcl

# Create a Kubernetes auth role that uses this policy
vault write auth/kubernetes/role/my-app-role \
  bound_service_account_names=my-app \
  bound_service_account_namespaces=production \
  policies=app-policy \
  ttl=1h
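
Policy paths are easy to get subtly wrong, so it can help to reason about what a pattern will match before you apply it. Here is a simplified Python sketch of the matching rules as I understand them: a trailing * is a prefix match, and + stands for exactly one path segment. The policy_path_matches helper is hypothetical, and it does not model how Vault picks the most specific pattern when several match.

```python
def policy_path_matches(pattern: str, path: str) -> bool:
    """Check a single policy path pattern against a request path."""
    is_prefix = pattern.endswith("*")
    pat = pattern[:-1].split("/") if is_prefix else pattern.split("/")
    segs = path.split("/")
    # A prefix pattern needs at least as many segments; an exact one the same.
    if len(segs) < len(pat) or (not is_prefix and len(segs) != len(pat)):
        return False
    *head, last = pat
    for want, got in zip(head, segs):
        if want != "+" and want != got:
            return False
    got_last = segs[len(head)]
    if is_prefix:
        # Everything after the matched prefix is allowed.
        return got_last.startswith(last)
    return last == "+" or last == got_last
```

For the policy above, policy_path_matches("secret/data/app/*", "secret/data/app/api-key") is true, while the database pattern matches app-readonly but not app-readwrite. That is the behavior you want from a least-privilege policy.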

Step 6: Monitoring and Audit

You need visibility into who’s accessing secrets. Enable audit logging:

bash

# Enable file audit device
vault audit enable file file_path=/vault/audit/vault-audit.log

# Enable syslog for centralized logging
vault audit enable syslog tag="vault" facility="AUTH"
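
The file audit device writes one JSON object per line, which makes quick "who accessed what" summaries easy to script. This is a minimal Python sketch, assuming each entry carries a type field plus auth.display_name, request.operation, and request.path, which matches the audit entries I have seen. The summarize_audit_log helper is hypothetical.

```python
import json
from collections import Counter


def summarize_audit_log(lines) -> Counter:
    """Count audited requests per (client name, operation, path)."""
    counts = Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        entry = json.loads(line)
        if entry.get("type") != "request":
            continue  # skip the paired "response" entries
        auth = entry.get("auth") or {}
        request = entry.get("request") or {}
        key = (
            auth.get("display_name", "unknown"),
            request.get("operation", "?"),
            request.get("path", "?"),
        )
        counts[key] += 1
    return counts
```

Passing an open file handle for /vault/audit/vault-audit.log works, since the function only iterates over lines. Calling most_common(10) on the result shows the busiest client and path combinations.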

For monitoring, Vault exposes Prometheus metrics:

yaml

# ServiceMonitor for Prometheus
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: vault
  namespace: vault
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: vault
  endpoints:
    # The Helm chart names the API port "https" when TLS is enabled
    - port: https
      path: /v1/sys/metrics
      params:
        format: ["prometheus"]
      scheme: https
      tlsConfig:
        insecureSkipVerify: true

Key metrics to alert on:

yaml

# Prometheus alerting rules
groups:
  - name: vault
    rules:
      - alert: VaultSealed
        expr: vault_core_unsealed == 0
        for: 1m
        labels:
          severity: critical
        annotations:
          summary: "Vault is sealed"
          description: "Vault instance {{ $labels.instance }} is sealed and unable to serve requests"

      - alert: VaultTooManyPendingTokens
        expr: vault_token_count > 10000
        for: 5m
        labels:
          severity: warning
        annotations:
          summary: "Too many Vault tokens"
          description: "Vault has {{ $value }} active tokens. Consider reducing TTLs."

      - alert: VaultLeadershipLost
        expr: increase(vault_core_leadership_lost_count[5m]) > 0
        labels:
          severity: warning
        annotations:
          summary: "Vault leadership changes detected"
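
Before trusting the alerts, it is worth confirming that the metrics endpoint really exposes the series they depend on. Here is a small Python sketch that pulls the values of one metric out of the Prometheus text format. It assumes lines look like name{labels} value with no trailing timestamp, and the metric_values helper is hypothetical.

```python
def metric_values(exposition: str, name: str) -> list[float]:
    """Return every sample value for `name` in Prometheus text output."""
    values = []
    for line in exposition.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        # Assumption: the sample value is the last space-separated field.
        series, _, value = line.rpartition(" ")
        metric_name = series.split("{", 1)[0]
        if metric_name == name:
            values.append(float(value))
    return values
```

If metric_values(text, "vault_core_unsealed") comes back empty for a scrape of /v1/sys/metrics, the VaultSealed rule can never fire, and that is better discovered now than during an incident.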

Common Mistakes to Avoid

Let me save you some headaches by sharing mistakes I’ve seen (and made):

Mistake 1: Using the root token for applications

The root token has unlimited access. Create specific policies and tokens for each application.

Mistake 2: Not rotating the root token

After initial setup, generate a new root token and revoke the original:

bash

vault operator generate-root -init
# Follow the process to generate a new root token

vault token revoke <old-root-token>

Mistake 3: Setting TTLs too long

Short TTLs mean compromised credentials are valid for less time. Start with 1 hour and adjust based on your needs.

Mistake 4: Not testing recovery procedures

Practice unsealing Vault. Practice recovering from backup. Do it regularly. The worst time to learn is during an actual incident.

Mistake 5: Storing unseal keys together

Distribute unseal keys (or recovery keys, if you use auto-unseal as configured above) to different people in different locations. Use a threshold scheme (3 of 5) so no single person can unseal Vault or generate a new root token.
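
If you are curious why 3 of 5 works, the idea behind it is Shamir's Secret Sharing: the secret is the constant term of a random polynomial, and each share is one point on that polynomial. Here is an illustrative Python sketch over a prime field. It is not Vault's implementation, and the split_secret and combine_shares names are hypothetical.

```python
import secrets

PRIME = 2**127 - 1  # a Mersenne prime; the secret must be smaller than this


def split_secret(secret: int, shares: int, threshold: int) -> list[tuple[int, int]]:
    """Split `secret` into points on a random polynomial of degree threshold-1."""
    if not 0 <= secret < PRIME:
        raise ValueError("secret out of range")
    if not 1 <= threshold <= shares:
        raise ValueError("threshold must be between 1 and shares")
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    points = []
    for x in range(1, shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule
            y = (y * x + c) % PRIME
        points.append((x, y))
    return points


def combine_shares(points: list[tuple[int, int]]) -> int:
    """Recover the secret (the polynomial at x=0) by Lagrange interpolation."""
    secret = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret
```

Any three of the five points returned by split_secret(s, shares=5, threshold=3) reconstruct s through combine_shares, while two points alone are consistent with every possible secret. That property is why losing one or two key holders is survivable, and why no single holder is a risk.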

Regards, Enjoy the Cloud

Osama